In the space of ten days in April 2026, three of the four most capable AI models ever built shipped within weeks of each other. Claude Opus 4.7 landed on April 16. GPT-5.5 arrived on April 23. Gemini 3.1 Pro had been in developer preview since February, quietly improving while the other two traded press releases.

The benchmarks are a mess. Every lab publishes the numbers that make their model look best. OpenAI emphasises terminal performance. Anthropic leads with coding. Google highlights reasoning and context window. They're all telling the truth about something. None of them are telling you what you actually need to know.

So here's an attempt at that.

What Each Model Is Actually Built For

The three models are not competing for the same user anymore. That's the most important thing to understand about the current landscape — the gap has shifted from raw intelligence to specialisation.

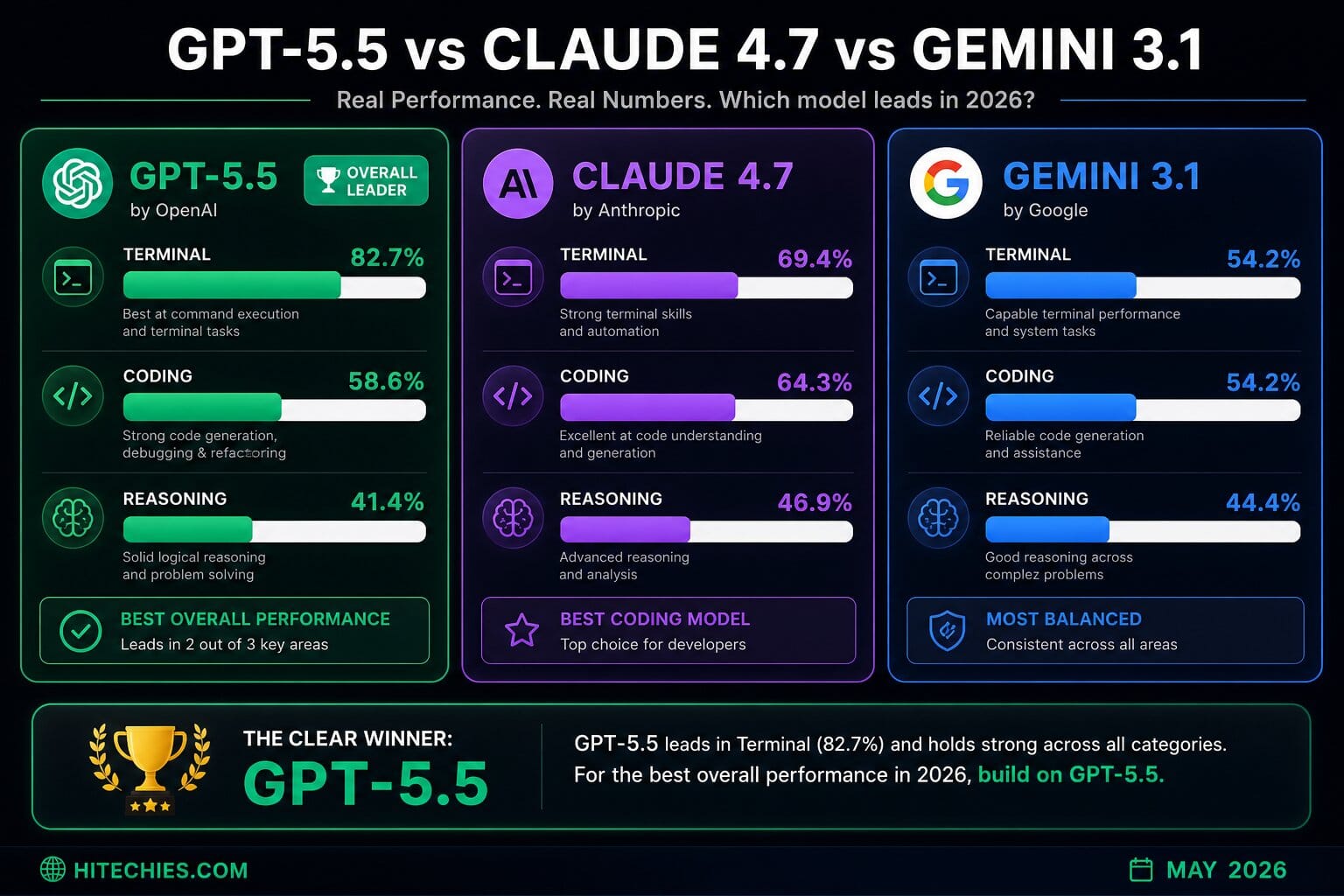

GPT-5.5 is OpenAI's bet that the future of AI is autonomous agents doing real computer work. The model scores 82.7% on Terminal-Bench 2.0, which tests command-line workflows, shell scripting, container orchestration, and tool chaining. That's not a benchmark designed for a chatbot. It's designed for a model that lives in a terminal and executes things. If you're building agents that need to interact with operating systems, run commands, and chain tools reliably, GPT-5.5 is currently the strongest choice for that specific workload.

What it's less good at: complex multi-step reasoning. On Humanity's Last Exam — the benchmark that tests whether a model can solve graduate-level problems without any external tools — GPT-5.5 scores 41.4%. That puts it third of the three models being compared here.

Claude Opus 4.7 is where you go when correctness matters more than speed. On SWE-Bench Pro — the benchmark that tests real GitHub issue resolution across actual production codebases — Opus 4.7 scores 64.3%. GPT-5.5 scores 58.6%. Gemini 3.1 Pro scores 54.2%. That 5.7 point gap over GPT-5.5 represents a meaningful difference in how often the model ships code that actually works versus code that looks plausible but breaks in edge cases.

The CEO of Cursor, Michael Truell, reported that Opus 4.7 "lifted resolution by 13% over Opus 4.6" on their internal benchmark — a 93-task test built from real-world developer problems. The model apparently solved four tasks that neither the previous Opus nor Sonnet 4.6 could touch.

Opus 4.7 also leads on tool use and MCP integration, scoring 77.3% on MCP-Atlas — the benchmark closest to measuring real production agent behaviour with multi-turn tool calls. This matters if you're building systems where the agent needs to orchestrate multiple services across a long session.

Gemini 3.1 Pro is Google's cost-performance play, and the numbers justify the positioning. At $12 per million output tokens, it's 60% cheaper than Claude Opus 4.7 ($30/M) and 75% cheaper than GPT-5.5 Standard ($60/M). And it's not paying for that price advantage with incompetence: Gemini 3.1 Pro scores 44.4% on HLE and 54.2% on SWE-Bench Pro. Those numbers are within 2.5 points of Opus 4.7 on reasoning, not five times better — which is what you'd need to justify the price difference.

Where Gemini stands alone is context. The 1-million-token context window is genuinely useful for codebases, long documents, and research workflows. GPT-5.5 offers 256K. That's sufficient for most tasks but limiting if your agents are working across large repositories or analysing long document sets simultaneously.

The Benchmarks Nobody Agrees On

It would be convenient if these models had a single number you could compare. They don't.

On GPQA Diamond — graduate-level knowledge in physics, chemistry, and biology — Gemini 3.1 Pro scores 94.3%, Claude Opus 4.7 scores 94.2%, and GPT-5.5 scores 93.6%. That's a 0.7 point spread. You would need to run a very large number of tasks to see that difference manifest in practice.

On HLE with tools enabled — where models have access to code execution and search — the order shifts: Claude 54.7%, GPT-5.5 52.2%, Gemini 51.4%. Claude pulls ahead when it has resources to work with.

On FrontierMath, testing advanced mathematical reasoning, GPT-5.5 Pro wins clearly. If your application involves financial modelling, scientific computation, or formal verification, this is the benchmark that matters — and GPT-5.5 currently leads it.

The honest interpretation of all this data: these models are genuinely close on most tasks. The differences are real but narrow. Making a choice based on headline benchmarks is less important than making a choice based on your actual workload.

The Pricing Reality

This is where the decision often gets clearer than the benchmarks.

| Model | Input (per 1M tokens) | Output (per 1M tokens) |

|---|---|---|

| GPT-5.5 Standard | $15 | $60 |

| Claude Opus 4.7 | $15 | $75 |

| Gemini 3.1 Pro | $2 | $12 |

At any kind of volume, Gemini's cost advantage becomes significant. Routing everything through GPT-5.5 Standard because it's the newest model costs 5x more per output token than Gemini 3.1 Pro for tasks where Gemini performs within 2-3 percentage points on the benchmarks that matter.

The developers getting the best results right now are not picking one model and being loyal to it. They're routing by task type.

A Practical Routing Framework

Based on where the benchmarks actually land:

Use Claude Opus 4.7 when: writing, reviewing, or debugging production code; complex multi-file reasoning; multi-turn tool orchestration; anything where correctness matters more than raw speed.

Use GPT-5.5 when: building agents that operate in terminals or browser environments; tasks involving command-line workflows; long-document retrieval where the 256K-vs-1M difference doesn't apply; mathematical reasoning applications.

Use Gemini 3.1 Pro when: high-volume production workloads where cost compounds; RAG pipelines; summarisation; classification; research tasks requiring large context windows; anything where Gemini's near-parity on reasoning is sufficient and you're paying per token.

The developers who treat model selection as a routing problem — not a loyalty problem — will ship better products at lower cost. An IDC analyst framed this plainly: "No single model wins everywhere, which is healthy for the ecosystem and gives developers real choices based on specific needs."

One More Thing Nobody's Saying Loudly

There's a fourth model that dropped the same day as GPT-5.5 and deserves mention: DeepSeek V4, which shipped on April 23 with open weights and API pricing that, in the words of one developer who tested all four, "felt like a typo."

For teams with the infrastructure to run open-weight models, DeepSeek V4 changes the calculus further. The compute war driving prices up among the closed-model providers doesn't apply if you're running inference yourself. That's a different article. But if you're evaluating the frontier in May 2026, the frontier includes models you can download and run on your own hardware.

The market is moving fast enough that this comparison will need updating in six months. The model you pick today is not a permanent decision. Build your integrations loosely, verify your assumptions on your actual workload, and treat the benchmark numbers as starting points rather than verdicts.