In early April 2026, the CEO of a software platform called Retool told the Wall Street Journal something that cut through months of AI hype with unusual clarity.

He said he preferred Anthropic's Claude for his company's work. He switched to OpenAI anyway.

The reason wasn't a better model. It wasn't pricing. It was that Anthropic's service kept going down.

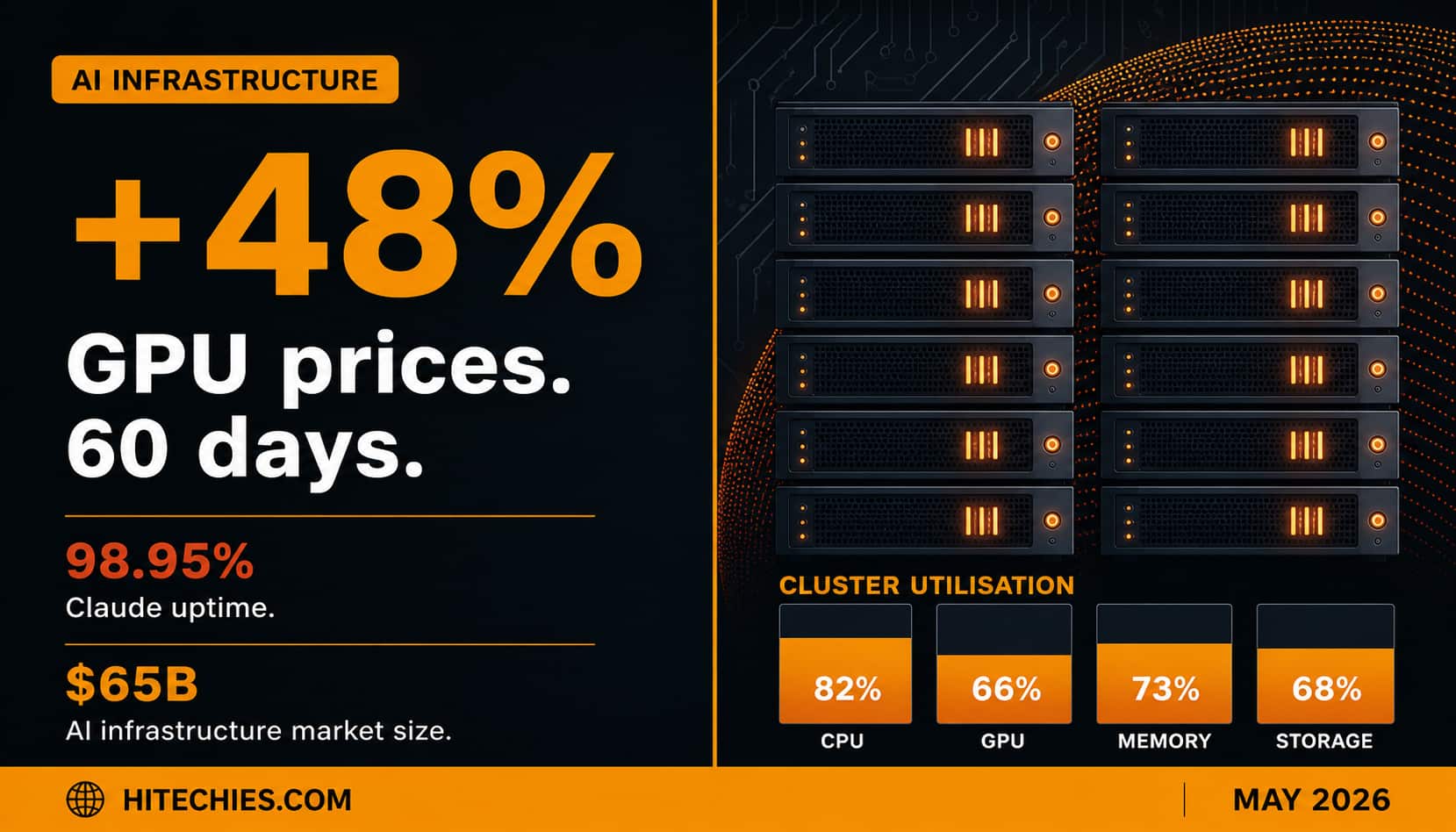

Over the 90 days ending April 8, Claude's API uptime sat at 98.95%. That number sounds almost acceptable until you compare it to the 99.99% uptime that established cloud providers typically maintain. In practice, 98.95% uptime means roughly 7.5 hours of downtime per month for a service that enterprise customers are increasingly embedding into their core workflows. David Hsu didn't leave because he found a better model. He left because he needed a service that was actually there when his customers needed it.

That story is a small window into something much larger happening across the entire AI industry in the first half of 2026.

What's Actually Running Out

The shortage isn't theoretical. Token usage across major AI providers jumped from 6 billion to 15 billion per minute between October 2025 and March 2026. GPU prices on the spot market climbed 48% in two months — one hour on a latest-generation Blackwell chip now costs $4.08, up from $2.75 in February. Bank of America analysts expect demand to outstrip supply through at least 2029.

OpenAI's CFO Sarah Friar was unusually direct about the situation: "We're making some very tough trades at the moment on things we're not pursuing because we don't have enough compute."

One of those trades became public in March when OpenAI announced it was shutting down Sora, its video generation product, to free up compute resources for coding tools and enterprise products. The decision to kill a highly publicised product because you physically can't run it and your other services simultaneously is not a routine business pivot. It's a signal about how tight the constraints actually are.

The same week, Anthropic adjusted its usage limits and started offering double usage during off-peak hours to spread load more evenly — a classic technique for managing infrastructure that can't meet peak demand. Windsurf replaced its credit system with daily and weekly quotas. OpenAI shifted Codex billing from flat message-based pricing to token-based metering.

The pattern is consistent: every major provider simultaneously telling their users, in different ways, that there are limits to how much compute they can consume.

Why This Is Structurally Different From Past Shortages

AI infrastructure shortages aren't new. What's different in 2026 is the layer where the constraint sits.

For the first two years of the current AI wave, the bottleneck was training compute — you needed vast GPU clusters to train frontier models, which were expensive and relatively rare. But training happens once. Once a model is trained, that cost is sunk.

The constraint that's binding now is inference compute — the compute required every time a model responds to a query. And inference doesn't happen once. It happens billions of times per minute, and the number keeps growing.

What's made this worse is the shift to AI agents. Traditional chat interactions are short — prompt in, response out. Agents are different. They run for minutes or hours, chaining tool calls, holding state, executing tasks. Vultr CEO J.J. Kardwell described the current capacity crisis to the WSJ as "unlike anything he has seen in more than five years." The bottleneck isn't just GPUs anymore. CPU usage in AI data centres has grown so dramatically that Microsoft sold out all of its extra CPU capacity to Anthropic and OpenAI. Amazon tripled its annual CPU order volume and still can't keep pace. TSMC has announced it will only meet 80% of server CPU wafer orders this year.

Available power capacity through 2026 is already spoken for. That's not a metaphor. The grid capacity that could theoretically support new data centre construction has been booked. Physical infrastructure — substations, cooling systems, power contracts — takes years to build. The software demand curve is not on the same timeline as the physical infrastructure curve.

What $65 Billion in Deals in One Week Means

On April 24, 2026, Google announced it would invest up to $40 billion in Anthropic, lifting the company's valuation to $350 billion and locking in 5 gigawatts of dedicated compute capacity on Google's TPU infrastructure. This came days after Amazon expanded its own commitment to as much as $25 billion.

In one week, Anthropic secured roughly $65 billion in pledged equity capital and 10 gigawatts of reserved AI training power.

For context: 5 gigawatts of compute is roughly equal to the peak summer load of metropolitan San Francisco.

The deal tells you several things at once. It tells you how serious the compute shortage is, because this is what it costs to credibly address it. It tells you that the frontier is bifurcating into companies that own infrastructure and companies that rent it, and that the former have a structural advantage the latter cannot buy their way out of quickly. A former senior Google Cloud executive described the deal plainly: "Sundar is paying to make sure that the most credible non-OpenAI frontier lab does not drift into a Microsoft or Oracle camp. Forty billion is a lot of money. It is also less than what losing this anchor would cost."

And it tells you something about the nature of competitive moats in 2026. Algorithmic innovation is still crucial. But at the current scale, compute access is at least as important as model quality. Dylan Patel of SemiAnalysis has warned that "even tier two or tier three labs are going to be sold out of tokens." The economic value of capable models is growing faster than anyone's ability to serve them.

What This Means If You're Building

This is not abstract infrastructure news. It has immediate practical consequences for anyone building on top of AI APIs.

Reliability is now a product feature. If you're building a product that embeds AI into a workflow your customers depend on, the uptime of your AI provider is now part of your product's reliability story. The Retool situation is not unique. Anthropic's 98.95% uptime has been losing them enterprise customers to OpenAI. When you're evaluating providers, uptime statistics and SLA commitments deserve the same scrutiny as benchmark scores.

Multi-provider architecture is no longer optional. The developers who built tight integrations with a single provider and assumed that provider would always be available are the ones getting paged at 2am when an outage hits. Building with provider switching in mind — something tools like the ofox API proxy make trivially easy — is now a legitimate architectural decision rather than premature optimisation.

Compute costs are going to become part of unit economics conversations. The days when AI API costs were a rounding error in a startup's budget are ending. GPU prices up 48% in two months, token-based pricing replacing flat fees, premium tiers for heavy agentic workloads — the cost curve is moving in one direction. Teams that haven't modelled AI costs carefully are going to have uncomfortable conversations with their finance function.

Open weights are becoming a business continuity strategy. When closed API providers have rate limits, outages, and the ability to change pricing unilaterally, the argument for open-weight models like Llama, Mistral, and DeepSeek becomes less about capability and more about control. "You're building on rented land" has always been true of closed API dependencies. The compute crisis makes that metaphor more literal.

The Longer View

Tom Tunguz, a veteran tech investor, summarised the current situation in a post that circulated widely among founders in April: access to the bleeding edge is "becoming a gated privilege, for both capacity and security."

He listed five hallmarks of the compute scarcity era: relationship-based selling where state-of-the-art models may no longer be open to everyone; pricing that favours capital-heavy companies; available-but-slow access even when you can pay; and a structural advantage for companies that generate strong enough revenue to secure dedicated capacity.

That's a significant change from the narrative of the last two years, where the story was essentially one of democratisation — AI getting cheaper, more capable, and more accessible for everyone.

The compute war doesn't end that story. But it complicates it in ways that matter for anyone trying to build a business on top of AI infrastructure they don't own.

The companies securing 5-gigawatt compute deals today are writing the terms that everyone else will be operating under for the next decade. That's worth understanding before you need to.