The email looked perfect.

The sender's name matched the CFO's name in the company directory. The tone was right — slightly terse, slightly urgent, the way busy executives write. It referenced a real acquisition the company had been discussing internally, which the attacker had scraped from a LinkedIn post two weeks earlier. It asked the finance director to initiate a wire transfer to a new vendor account before the close of business. The amount was large enough to matter but not so large that it would automatically trigger an approval workflow.

There was no malware in the email. No suspicious attachment. No misspelt words, no awkward phrasing, no tell that would have tripped a spam filter or made a trained employee pause.

The email was written by a language model in about four seconds.

The finance director transferred the money. This scenario, with minor variation, is now happening at a rate that no central authority fully tracks — because the attacks that succeed leave companies more concerned with managing the damage than reporting the details. What gets reported is enough to establish the shape of the problem. The shape is not reassuring.

The Numbers Changed

The phishing email was the first place where most people learned to recognise AI-enhanced attacks, because it's the most visible. It is also where the data is most stark.

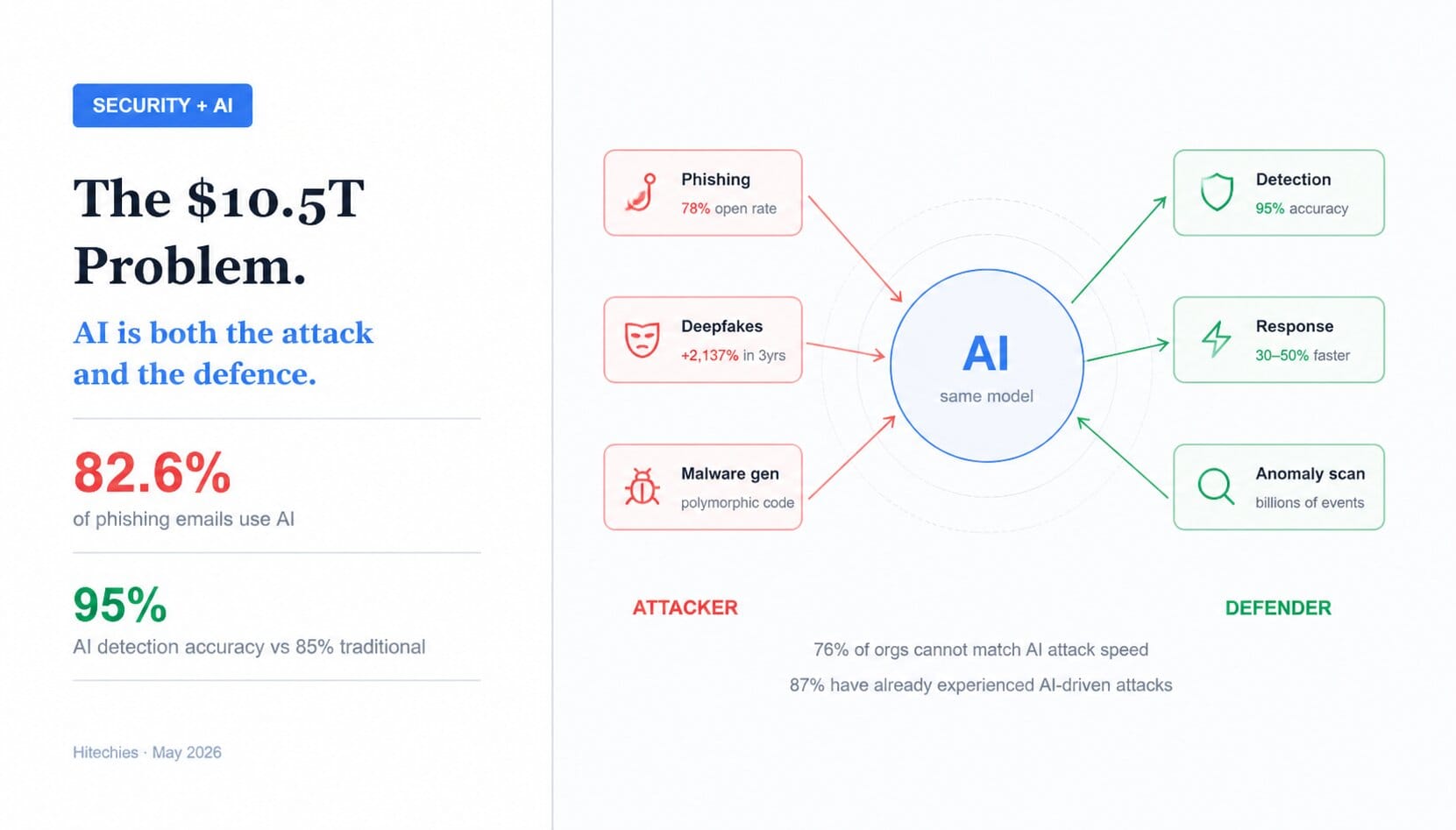

82.6% of phishing emails now incorporate AI in some form, according to research from DeepStrike. AI-generated phishing emails achieve a 78% open rate and a 21% click-through rate — nearly double the response rates of professionally crafted marketing emails. Think about that comparison for a moment. The most carefully optimised legitimate marketing email, written by human copywriters and refined through A/B testing, gets clicked at roughly half the rate of an AI-generated phishing attempt.

The reason is structural. Human-written phishing emails used to be identifiable by their flaws: the generic greeting, the mismatched tone, the grammatical slip that suggested a non-native English speaker. AI removes all of that. The language reads naturally. The content is personalised. The tone matches the person being impersonated. And because the messages are uniquely generated for each target, signature-based filters — which look for patterns repeated across many messages — cannot catch them.

87% of organisations worldwide experienced AI-driven cyberattacks in the past year. 85% reported some form of deepfake-related incident. Deepfake fraud attempts increased 2,137% over three years, according to Signicat's research. At the peak of 2024, a deepfake attack was occurring somewhere every five minutes.

What AI Lets Attackers Do That They Couldn't Before

Understanding the threat requires understanding what the technology actually changes about the economics of attack.

Previously, sophisticated social engineering attacks — the ones that required research on targets, personalised messaging, convincing impersonation — were expensive to execute. They required skilled human operators who could only run a limited number of simultaneous campaigns. This naturally limited their scale. The well-crafted CEO fraud email that convinces a finance director to wire funds was a high-effort, high-reward operation deployed selectively.

AI changed the cost structure. Reconnaissance that previously took a skilled analyst days can now be automated in hours: scraping LinkedIn profiles, company websites, press releases, social media, public filings, and conference appearances to build a detailed picture of targets and their relationships. Personalised messages can be generated at industrial scale from that data. Voice cloning — using a few minutes of publicly available audio — can synthesise a convincing call from a trusted person.

41% of zero-day vulnerabilities in 2025 were discovered through AI-assisted reverse engineering by attackers. The financial sector experienced a 47% year-over-year increase in AI-enhanced malware. 76% of organisations cannot currently match AI attack speed — meaning even when defenders identify what's happening, they often cannot respond fast enough to prevent damage.

The most alarming single statistic from Darktrace's State of AI Cybersecurity 2026 report: 92% of security professionals are concerned about the impact of AI agents. Not AI as a tool, but AI agents — autonomous systems that can chain decisions and actions together without human intervention at each step. The prospect of an autonomous attack agent that can identify targets, craft personalised approaches, execute the attack, and adapt in real time to defensive responses has not fully materialised at scale yet. The concern is that the gap between now and then is shorter than defenders would like.

The Same Technology Is the Best Defence Available

Here is where the picture becomes genuinely complex rather than simply alarming.

The AI that lets attackers generate thousands of personalised phishing emails is the same category of technology that lets defenders analyse billions of network events simultaneously and identify anomalies that no human analyst could catch. The gap between these two capabilities is not fixed — it is being contested in real time, with both sides deploying increasingly capable systems.

AI-powered security delivered 95% threat detection accuracy versus 85% for traditional methods, according to 2026 industry benchmarking. Incident response times dropped 30 to 50% in organisations that have fully integrated AI into their security operations. The global AI security market is projected to grow from $25.35 billion in 2024 to $93.75 billion by 2030, a 24.4% compound annual growth rate. Capital is flowing toward these tools because the ROI is measurable.

Anomaly detection is the clearest win for defenders. A human analyst reviewing network logs is pattern-matching against their experience and intuition, which is valuable but bounded. A machine learning model trained on an organisation's baseline behaviour can flag deviations at a granularity and speed that human analysts cannot match — and can do it across every network segment simultaneously. The systems that are making real differences in security outcomes in 2026 are not the AI that writes threat reports. They are the ones that silently watch every authentication event, every lateral movement, every unusual data access, and surface the 0.1% that warrant human investigation.

But there is a gap between what these systems can do and what most organisations have actually deployed. Only 5% of company leaders report having comprehensive deepfake attack prevention across multiple levels. 62% of small businesses faced AI-driven attacks in 2025 without having AI-powered defences to match. The organisations that are winning the AI security contest are the ones with the budget and expertise to deploy and tune these tools properly. For most companies, the asymmetry currently favours the attackers.

The Threat Nobody Is Fully Prepared For

The deepfake call and the AI-generated email are problems organisations can at least recognise and begin to address. There is a category of AI-enabled attack that is newer and harder to defend against: attacks on AI systems themselves.

Prompt injection — manipulating an AI system into ignoring its instructions by embedding adversarial content in the data it processes — is now a documented attack vector against enterprise AI deployments. RAG poisoning involves corrupting the data sources that AI systems retrieve from, causing them to return subtly incorrect or harmful outputs. Model tampering targets the AI systems themselves, attempting to alter their behaviour in ways that persist across interactions.

As organisations deploy AI agents into workflows — customer service bots, code review systems, document analysis tools, security monitoring systems — each of these represents a new attack surface that traditional security models were not designed to address. The question of how to apply security principles to AI components is not fully answered. The frameworks are developing. The attacks are developing faster.

What Changes the Equation

There is a version of this analysis that ends with a list of products to buy. That version is not this one.

The organisations that are navigating the AI security landscape most effectively in 2026 are doing something more fundamental: they have accepted that the threat model has changed and restructured accordingly. That means accepting that no employee, at any level, can reliably identify a sophisticated AI-generated phishing attempt by reading it. It means authentication frameworks that don't rely on a single signal. It means AI-powered monitoring because signature-based detection is insufficient against polymorphic AI-generated malware. It means testing incident response plans against realistic AI-enabled attack scenarios, not against the threat landscape of five years ago.

The $10.5 trillion annual cost of global cybercrime is not going down. Every credible forecast has it going up. What changes the trajectory is not any single tool or technique. It is the speed at which defenders adopt AI as seriously as attackers already have.

That adoption is happening. It is not happening fast enough, or evenly enough, to close the gap. The 76% of organisations that cannot currently match AI attack speed are the ones that will populate the breach reports of the next two years.