Let me start with something that sounds unfair but isn't. Vibe coding is genuinely incredible. Describing what you want in plain English and watching working software appear is not a gimmick — it's a real productivity shift that I use and you probably should too. Anyone telling you to just stop is selling you something more expensive than the problem.

But there's a difference between using a tool well and shipping whatever it generates. Right now a significant slice of the industry is doing the latter, and the data on what that produces has stopped being ambiguous.

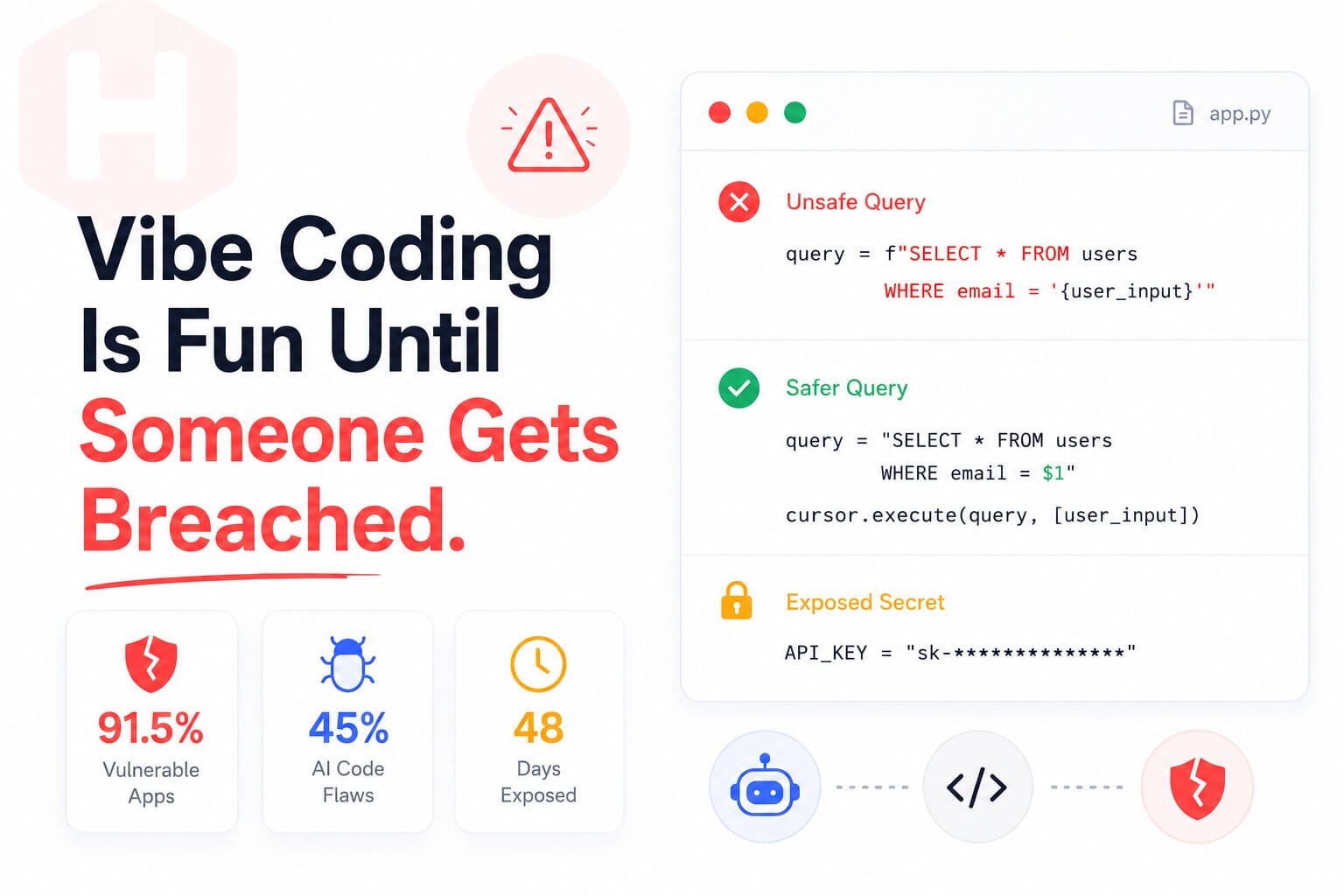

A first-quarter 2026 scan of more than 200 vibe-coded applications found that 91.5% contained at least one vulnerability traceable to AI hallucination (vibe-eval.com, Q1 2026). Not apps built by careless people — apps built the way most people build with these tools right now. That number alone should settle the "is this actually a problem" debate.

The more precise framing: AI generates code that works. Security is not the same thing as works. Vibe coding optimises hard for works and treats everything else as someone else's problem. That someone else is usually you, six months later, explaining to users why their data was exposed.

This is not the same as using Copilot — the distinction matters

Context first

Traditional AI coding assistants (Copilot, Codeium, tab completion) are suggestion tools. You're still the author. You have context, you accept or reject completions, you understand what you're shipping. That's a fundamentally different relationship from the one you have with Cursor in agent mode, Lovable, Bolt, v0, or Replit Agent.

With vibe coding you describe the outcome and the AI makes every implementation decision — which packages, how to structure auth, how to query the database, what to validate, what to skip. You review whether it runs. You often can't easily review whether it's secure, because you didn't write it line by line and you can't trace why any particular decision was made without reading through code that is not written the way you would have written it.

Gartner projects 60% of all new code written in 2026 will be AI-generated. The platforms enabling that shift have seen adoption surge well beyond early developer audiences into product teams, founders, and non-technical builders who are shipping to production. A lot of that code is going to real users.

What happened at Lovable — and why it's everyone's problem, not just theirs

The case study

Lovable is by several measures the fastest-growing software startup in recent history. $6.6 billion valuation. 8 million users. A product that genuinely does what it says. I'm not here to tell you Lovable is bad software. I'm here to document what happened when shipping velocity met security at scale.

Over 48 days in early 2026, a BOLA vulnerability — Broken Object Level Authorisation, meaning users could access other users' data — sat open across projects built on the platform. Someone filed a bug bounty report. The ticket was closed without proper escalation. The fix went out for new projects but not the thousands of existing ones already exposed. What got exposed: source code, database credentials, AI chat histories, and personal data of thousands of users.
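To make the BOLA class concrete, here is a minimal sketch of an object-level check. The `projects` map, the function names, and the field names are all hypothetical and are not taken from Lovable's code. The only point is that being logged in is not enough: the server also has to confirm that the requester owns the specific record being asked for.

```javascript
// Hypothetical in-memory store standing in for a real database table.
const projects = new Map([
  ['p1', { id: 'p1', ownerId: 'alice', name: 'Alice site' }],
  ['p2', { id: 'p2', ownerId: 'bob', name: 'Bob shop' }],
]);

// Vulnerable shape: anyone who knows or guesses an ID can read the project.
function getProjectUnsafe(projectId) {
  return projects.get(projectId) ?? null;
}

// Safer shape: the lookup is scoped to the requester.
// Returning null for both "missing" and "not yours" avoids confirming
// to the caller that a given ID exists.
function getProjectForUser(requesterId, projectId) {
  const project = projects.get(projectId);
  if (!project || project.ownerId !== requesterId) return null;
  return project;
}
```

In a real application the same idea usually lives in the query itself (filtering by owner as well as by ID) or in database-level access policies, so that a single forgotten check in application code cannot expose the record.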

The public response cycled through denial, deflection, blaming the documentation, blaming the bug bounty partner, and a partial apology — within a single day. The cycle is familiar because the pattern is not unique to Lovable. It's structural to a market that rewards growth and measures speed.

The CVE count from AI-generated code went from six in January 2026 to thirty-five in March, according to Georgia Tech's SSLab Vibe Security Radar project — and their researchers note that what gets formally disclosed is likely a fraction of what actually exists in production systems.

Eight things AI does wrong — consistently, without being told to stop

From 1,430+ app scans

These are not hypothetical. They come from scanning 1,430+ real AI-built applications, which turned up 5,711 real vulnerabilities. This is what the model does when you don't specifically tell it not to.

Why it keeps happening — and why it's not really about careless developers

Root cause

I want to push back on the implied framing that vibe coding is dangerous because people are being stupid. Most of them aren't. The problem is structural.

AI models learn from what existed. What existed includes decades of Stack Overflow answers that prioritised working code over secure code, tutorials that skipped input validation because it complicated the example, and open-source projects where security was going to be addressed in the next version. The model reproduces those patterns because they were common and because they produce functional output — which is the objective it was trained to optimise for.

The second issue is that AI optimises for the objective you give it. "Build me a login system" is a functionality objective. The model builds a login system that logs users in. It does not build a system that resists credential stuffing, handles session expiry correctly, uses constant-time password comparison, or logs failed attempts — unless you explicitly require those things. Security requirements that aren't stated simply don't exist in the output.
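As an illustration of one item on that list, here is a minimal sketch of password verification with a constant-time comparison, using only Node's built-in `crypto` module. The function names and the stored record shape (`{ salt, hash }` as hex strings) are assumptions made for this example. It covers hashing and comparison only, not credential stuffing defences, session expiry, or logging of failed attempts.

```javascript
const crypto = require('node:crypto');

// Hash a password with a random per-user salt using scrypt.
function hashPassword(password) {
  const salt = crypto.randomBytes(16);
  const hash = crypto.scryptSync(password, salt, 64);
  return { salt: salt.toString('hex'), hash: hash.toString('hex') };
}

// Verify by re-deriving the hash and comparing in constant time,
// so the comparison does not leak how many leading bytes matched.
function verifyPassword(password, stored) {
  const salt = Buffer.from(stored.salt, 'hex');
  const expected = Buffer.from(stored.hash, 'hex');
  const actual = crypto.scryptSync(password, salt, expected.length);
  // timingSafeEqual throws if lengths differ; they match by construction here.
  return crypto.timingSafeEqual(actual, expected);
}
```

A plain `===` on the two hex strings would also return the right answer, which is exactly why a model optimising for "works" may never reach for `timingSafeEqual` unless the requirement is stated.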

What the vulnerable code actually looks like — so you recognise it when you see it

Real patterns

Abstract warnings are easy to skip. Here's what these vulnerabilities look like in code you might actually have shipped.

SQL injection — the AI's default move

    // Looks fine. Works fine. Terrible in production.
    const query = `SELECT * FROM users WHERE email = '${userEmail}'`;
    const result = await db.query(query);
    // Attacker input: ' OR '1'='1' --
    // Your database returns every user record.
    // This is not a theoretical attack.

    // Prompt: "always use parameterised queries, never interpolate input"
    const query = 'SELECT * FROM users WHERE email = $1';
    const result = await db.query(query, [userEmail]);
    // User input is data. Not SQL. The injection attempt does nothing.
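To see why the first version fails, it helps to print the SQL text the database actually receives. The sketch below needs no database. The two helper functions are hypothetical, and the `{ text, values }` return shape is only an illustration of keeping the SQL text and the input separate.

```javascript
// The vulnerable pattern: user input becomes part of the SQL text.
function buildUnsafeQuery(userEmail) {
  return `SELECT * FROM users WHERE email = '${userEmail}'`;
}

// The parameterised pattern: the SQL text is fixed and the input travels separately.
function buildSafeQuery(userEmail) {
  return { text: 'SELECT * FROM users WHERE email = $1', values: [userEmail] };
}

const attack = "' OR '1'='1' --";

console.log(buildUnsafeQuery(attack));
// SELECT * FROM users WHERE email = '' OR '1'='1' --'

console.log(buildSafeQuery(attack).text);
// SELECT * FROM users WHERE email = $1
```

In the unsafe output the attacker's quote closes the string literal early, the `OR '1'='1'` condition matches every row, and `--` comments out the leftover quote. In the safe version the SQL text never changes, whatever the input is.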

The auth guard that protects nothing

    // AI builds this. Looks right. Isn't.
    const ProtectedRoute = ({ children }) => {
      const { user } = useAuth();
      if (!user) return <Navigate to="/login" />;
      return children;
    };
    // Meanwhile, the API endpoint it calls:
    // app.get('/api/admin/data', async (req, res) => {
    //   res.json(await db.query('SELECT * FROM everything'));
    // })
    // No auth check. None. Call it directly from curl.
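The fix belongs on the server, not in the React component. Below is a minimal sketch of an Express-style middleware, written without importing Express so that it runs standalone. `requireRole` and `verifySession` are hypothetical names: `verifySession` stands in for whatever session or token check the application really uses, and is assumed to return a user object with a `role` field, or `null`.

```javascript
// Returns a middleware with the Express-style (req, res, next) signature.
function requireRole(role, verifySession) {
  return function (req, res, next) {
    // Expect an "Authorization: Bearer <token>" header.
    const token = (req.headers.authorization || '').replace(/^Bearer /, '');
    const user = token ? verifySession(token) : null;

    if (!user) return res.status(401).json({ error: 'not authenticated' });
    if (user.role !== role) return res.status(403).json({ error: 'forbidden' });

    req.user = user; // make the verified user available to the handler
    next();
  };
}
```

With Express, this would be wired in as `app.get('/api/admin/data', requireRole('admin', verifySession), handler)`, so a direct curl call without a valid admin token gets a 401 or 403 instead of the data.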

Secrets in the bundle — visible to everyone

    // AI puts this in your React component
    const supabase = createClient(
      'https://yourproject.supabase.co',
      'eyJhbGciOiJIUzI1NiIsInR5cCI6IkpXVCJ9...'
    );
    // This is in your bundled JavaScript.
    // Open DevTools → Sources → search 'eyJ'
    // Your database key is right there.
    // This is happening constantly, right now.
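The manual DevTools search can be roughly automated before a build ships. This is a minimal sketch, not a full secret scanner. The regex and the function names are assumptions for illustration, and it only looks for JWT-shaped strings, so other kinds of keys will pass straight through.

```javascript
// Three base64url segments separated by dots, the first starting with "eyJ".
// A base64-encoded JSON object that opens with {" followed by a letter
// always begins with those three characters.
const JWT_LIKE = /eyJ[A-Za-z0-9_-]+\.[A-Za-z0-9_-]+\.[A-Za-z0-9_-]+/g;

// Return every JWT-shaped string found in the bundle text.
function findJwtLikeStrings(bundleText) {
  return bundleText.match(JWT_LIKE) ?? [];
}

// Decode only the header segment to see what kind of token was found.
// This does not verify the signature and is not meant to.
function decodeJwtHeader(token) {
  const headerSegment = token.split('.')[0];
  return JSON.parse(Buffer.from(headerSegment, 'base64url').toString('utf8'));
}
```

Run against the contents of a built JavaScript file, any hit is worth a manual look. Whether a given match is dangerous depends on what that key grants, which is something only a person who knows the backend configuration can judge.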

What to actually do — and no, "stop using vibe coding" is not the answer

The practical part

The single most useful mental shift: treat AI-generated code like third-party code from an unknown author. You wouldn't deploy an npm package to production without checking what it does. Apply the same standard to output that came from a model optimised for a different objective than yours.

Start sessions with security requirements — not at the end

Databricks' red team found that security-specific prompts at the start of a session significantly reduced vulnerability rates with minimal impact on code quality. The word start matters. If you add security requirements after generating a bunch of insecure patterns, the model references its own earlier output and propagates the same problems through the "fixes."
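One way to make "at the start" a habit is to prepend the requirements in code rather than from memory. This is a minimal sketch. The requirement wording is drawn from the patterns in this article, not from the Databricks study, and `buildSessionPrompt` is a hypothetical helper.

```javascript
// Illustrative requirements only; adapt them to the project.
const SECURITY_REQUIREMENTS = [
  'Always use parameterised queries; never interpolate user input into SQL.',
  'Enforce authentication and authorisation on the server for every API route.',
  'Never place secrets or API keys in client-side code.',
  'Validate all user input on the server.',
];

// Put the security requirements ahead of the task so they frame the whole session.
function buildSessionPrompt(task) {
  const rules = SECURITY_REQUIREMENTS.map((r, i) => `${i + 1}. ${r}`).join('\n');
  return `Security requirements for this whole session:\n${rules}\n\nTask: ${task}`;
}

console.log(buildSessionPrompt('Build me a login system'));
```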

Five things to check before anything ships

Not all code deserves the same scrutiny

A colour scheme change and a payment processing flow are not the same risk level. The most effective teams apply tiered scrutiny — lighter review for isolated, low-stakes UI work, explicit security requirements and careful review for anything touching authentication, payments, user data, or external APIs. The question isn't "should we use AI here?" It's "how much scrutiny does this specific piece of code require before it ships?"
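Tiered scrutiny can start as something very simple, such as sorting changed files by path before review. The sketch below is deliberately coarse. The path patterns and function names are hypothetical, and a real project would tune them to its own layout.

```javascript
// Hypothetical patterns for code that touches auth, payments, user data, or APIs.
const HIGH_RISK_PATTERNS = [/auth/i, /payment/i, /billing/i, /user/i, /api\//i, /\.sql$/i];

// Classify one file path as 'high' or 'low' scrutiny.
function scrutinyTier(filePath) {
  return HIGH_RISK_PATTERNS.some((pattern) => pattern.test(filePath)) ? 'high' : 'low';
}

// Group a list of changed files by tier.
function reviewPlan(changedFiles) {
  const plan = { high: [], low: [] };
  for (const file of changedFiles) plan[scrutinyTier(file)].push(file);
  return plan;
}
```

A false positive here costs a few minutes of extra review, while a false negative can cost a breach, so it is reasonable to err on the side of matching too much.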

The head of the UK's NCSC said at RSA 2026 that the industry needs to build vibe coding safeguards before making the same mistakes it made at the start of the cloud era — shipping fast, worrying about security in the next sprint, and spending years cleaning up preventable breaches. That window is still open. Use it.

Vibe coding is here. It works. It's fast. It also produces vulnerable software by default if you let it. That last part is a choice, not an inevitability — and it's yours to make before the breach is.
