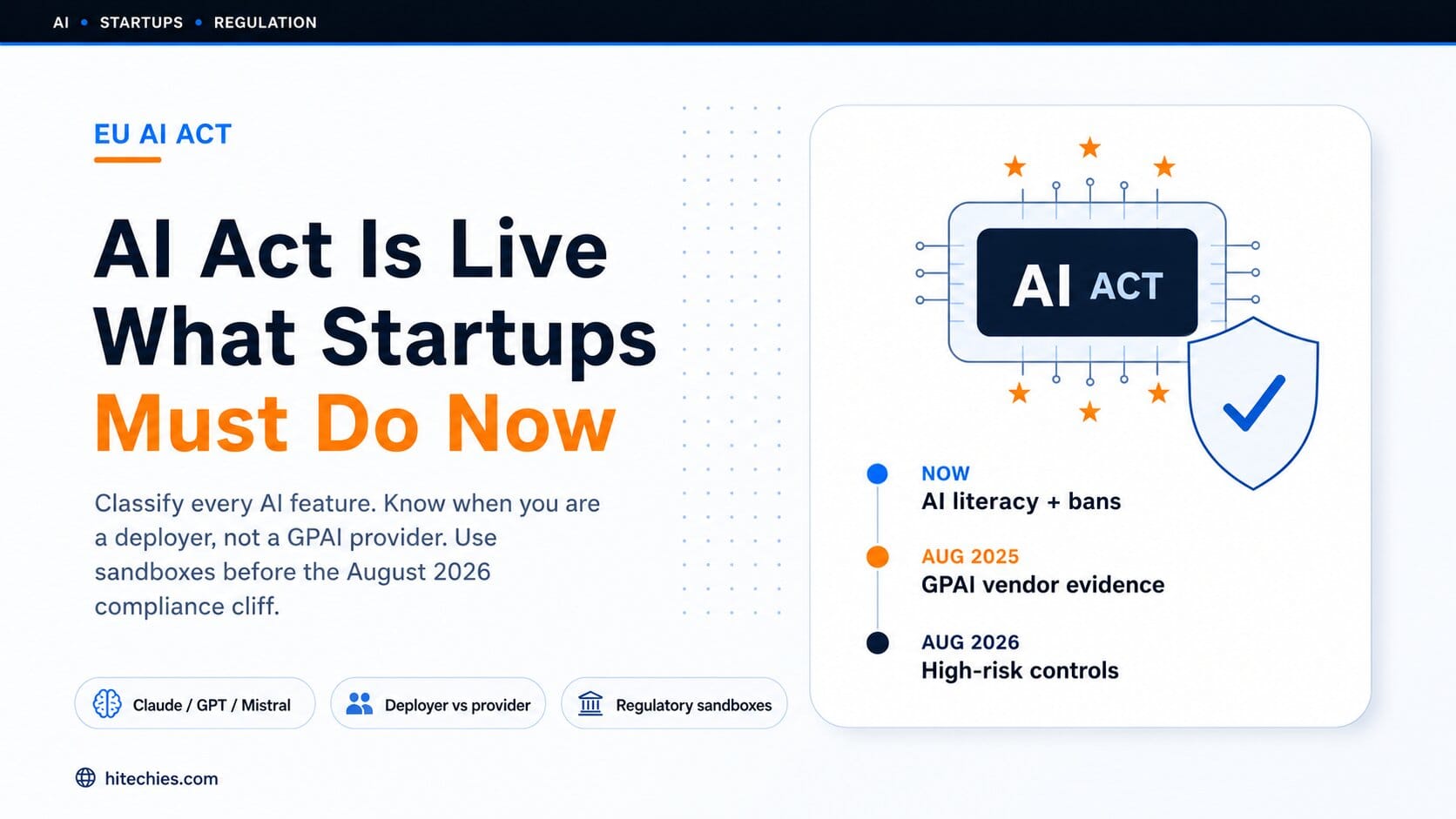

The EU AI Act is not a distant policy debate. It entered into force on 1 August 2024, and obligations have been rolling in ever since. For tech startups, the message is direct: classify every AI feature, document the decision, and build compliance evidence into product development before August 2026 arrives.

This article covers what's already live, what changes in August 2026, how to classify your use case, and what a practical compliance sprint actually looks like — without the legal jargon.

The risk-based model — four categories, very different obligations

The AI Act does not regulate all AI the same way. It uses a risk-based model with four categories. Which category your product falls into determines everything: the documentation you need, the controls you must build, and the fines you face if you get it wrong.

Are you a provider or a deployer? The distinction that changes everything

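Most founders don't need to become general-purpose AI model providers just because they call a third-party LLM API. The key distinction is this: a provider develops an AI system or general-purpose model and places it on the market under its own name, while a deployer uses an AI system under its own authority in a professional context.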

OpenAI, Anthropic, Google, Mistral, and Microsoft handle GPAI model-provider obligations for their underlying models. Your obligations attach to your own use case, customer interface, data, and role — not to the model underneath.

If your SaaS uses Claude, GPT, or another LLM — your obligations by use case

| Use case | Likely posture | What to do |
|---|---|---|
| Internal support drafting (staff use LLM, human reviews before sending) | Deployer | Add to AI inventory. Train staff. Document human review. Control personal data inputs. Keep vendor documentation. |
| Customer-facing SaaS chatbot (answers product questions under your brand) | Transparency | Tell users they're interacting with AI. Log failures. Document intended purpose. Define human escalation. Test for hallucinations. |
| AI content generator (marketing copy, product descriptions, email drafts) | Transparency | Label AI interaction. Keep output provenance. Add review workflow for regulated claims. Disclose AI-generated public-interest text. |
| CV ranking / hiring filter (LLM ranks applicants or filters candidates) | High risk | Full high-risk treatment: risk management, data governance, logs, human oversight, technical documentation, applicant information duties. |
| Clinical triage or eligibility (medical diagnostic support, eligibility decisions) | High risk | Do not ship on a generic checklist. Assess medical-device status, high-risk AI status, clinical safety, human oversight, incident reporting. |
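One way to keep that table from living only in a slide deck is to encode it as a lookup your product team can query during feature planning. The sketch below is an illustration, not a legal classifier: the slugs (`cv_ranking`, `customer_chatbot`, and so on), the `Guidance` type, and the `triage` function are hypothetical names, and the action lists are abbreviated from the table rows.

```python
from dataclasses import dataclass
from enum import Enum


class Posture(Enum):
    DEPLOYER = "Deployer"
    TRANSPARENCY = "Transparency"
    HIGH_RISK = "High risk"


@dataclass(frozen=True)
class Guidance:
    posture: Posture
    first_actions: tuple


# Keys are hypothetical slugs for the rows of the use-case table.
PLAYBOOK = {
    "internal_support_drafting": Guidance(
        Posture.DEPLOYER,
        ("Add to AI inventory", "Train staff", "Document human review"),
    ),
    "customer_chatbot": Guidance(
        Posture.TRANSPARENCY,
        ("Tell users they're interacting with AI", "Define human escalation"),
    ),
    "content_generator": Guidance(
        Posture.TRANSPARENCY,
        ("Label AI interaction", "Keep output provenance"),
    ),
    "cv_ranking": Guidance(
        Posture.HIGH_RISK,
        ("Risk management", "Human oversight", "Technical documentation"),
    ),
    "clinical_triage": Guidance(
        Posture.HIGH_RISK,
        ("Assess medical-device status", "Assess clinical safety"),
    ),
}


def triage(use_case: str) -> Guidance:
    """Return the likely posture, or fail loudly for an unclassified feature."""
    if use_case not in PLAYBOOK:
        raise LookupError(f"{use_case!r} has no documented classification yet")
    return PLAYBOOK[use_case]


if __name__ == "__main__":
    print(triage("cv_ranking").posture.value)
```

Raising an error for unknown use cases is deliberate: a feature that is not in the table should trigger a classification decision rather than default silently to a low-risk posture.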

What is already live — obligations in force right now

The AI Act's bans on unacceptable-risk practices became enforceable on 2 February 2025. These are not future obligations; they are live law right now.

The same February 2025 milestone brought AI literacy obligations into application. Organisations need appropriate AI understanding among staff and relevant people who operate or use AI systems. This means your product, engineering, and customer-facing teams need working knowledge of what your AI features do and how they can fail.

Rules for providers of general-purpose AI models became applicable on 2 August 2025, including transparency and copyright-related obligations. For startups using third-party models, the vendor's position under the GPAI Code of Practice becomes procurement evidence — ask whether your model provider has signed it, which chapters, and what documentation they provide to downstream customers.

What changes in August 2026 — the cliff most startups are heading toward

On 2 August 2026, the majority of AI Act rules start to apply. This includes rules for high-risk AI systems listed in Annex III, transparency rules under Article 50, national and EU-level enforcement, and the requirement that member states have at least one AI regulatory sandbox operational.
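The dates mentioned in this article can be kept as a small lookup, which is handy for a compliance dashboard or a CI reminder. This is a minimal sketch: the `MILESTONES` list only restates the dates given here, and the function names are hypothetical.

```python
from datetime import date

# Milestones as stated in this article; labels are abbreviated.
MILESTONES = [
    (date(2024, 8, 1), "AI Act entered into force"),
    (date(2025, 2, 2), "Bans on unacceptable-risk practices; AI literacy obligations"),
    (date(2025, 8, 2), "Rules for providers of general-purpose AI models"),
    (date(2026, 8, 2), "Annex III high-risk rules; Article 50 transparency; enforcement; sandboxes"),
]


def applicable_on(day: date) -> list:
    """Labels of every milestone that has already been reached on `day`."""
    return [label for start, label in MILESTONES if start <= day]


def days_until_next(day: date):
    """Days until the next milestone, or None if all have passed."""
    upcoming = [start for start, _ in MILESTONES if start > day]
    return (min(upcoming) - day).days if upcoming else None


if __name__ == "__main__":
    today = date.today()
    for label in applicable_on(today):
        print("in application:", label)
    print("days until next milestone:", days_until_next(today))
```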

High-risk AI systems cover use cases that affect health, safety, or fundamental rights — including AI used in critical infrastructure, education, employment, access to essential private or public services, law enforcement, migration and border control, justice, and democratic processes. Whether your system is high-risk depends on its intended purpose, function, specific use, and deployment model.

For startups building high-risk AI systems, compliance has to move upstream into product design. High-risk providers must prepare conformity evidence, risk management documentation, data governance, technical documentation, logging, human oversight controls, accuracy and robustness testing, cybersecurity measures, and post-market monitoring — before the system is placed on the market.

The fines are real — but the bigger risk is market access

The AI Act's penalty structure is severe. For SMEs and startups, each fine is capped at the lower of the relevant percentage of turnover or the fixed amount, but that does not remove the need to demonstrate compliance when authorities ask.
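The "lower of" rule is easy to misread, so here is the arithmetic as a small sketch. Two things in it are assumptions that do not come from this article and should be checked against Article 99 of the regulation: that the ceiling for larger companies is the higher of the two amounts, and the example tier of 7% of worldwide annual turnover or EUR 35 million for prohibited practices. The function name and parameters are hypothetical.

```python
def fine_ceiling(turnover_eur: float, pct_points: float,
                 fixed_eur: float, is_sme: bool) -> float:
    """Maximum fine for one infringement tier.

    pct_points is the percentage of annual turnover (7 means 7%).
    SMEs and startups: the lower of the two amounts (as stated in the article).
    Other companies: the higher of the two (assumption, verify in Article 99).
    """
    pct_amount = turnover_eur * pct_points / 100
    if is_sme:
        return min(pct_amount, fixed_eur)
    return max(pct_amount, fixed_eur)


if __name__ == "__main__":
    # Assumed example tier: 7% or EUR 35 million (check the regulation text).
    startup = fine_ceiling(2_000_000, 7, 35_000_000, is_sme=True)
    large = fine_ceiling(2_000_000_000, 7, 35_000_000, is_sme=False)
    print(f"startup ceiling: EUR {startup:,.0f}")
    print(f"large company ceiling: EUR {large:,.0f}")
```

For a startup with EUR 2 million in turnover, the percentage amount is far below the fixed amount, so the percentage sets the ceiling. This is a maximum, not a prediction of what an authority would actually impose.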

A practical AI Act backlog for startups — six moves before August 2026

Treat the AI Act as an operating system for responsible AI delivery. The work belongs in the product backlog, security backlog, data backlog, and vendor-management process, not in a static PDF that no one reads.

What startups should do this month — the two-week sprint

The most useful immediate move is a focused two-week sprint.

Week 1: Create the AI inventory. Classify each system. Identify any obviously prohibited or high-risk use cases. Freeze any risky launch until the classification is documented and signed off.

Week 2: Assign evidence owners. Request GPAI and vendor documentation. Add transparency requirements to the product backlog. Draft a board-level AI risk register.
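The sprint output can be as simple as a structured inventory plus a launch gate. The sketch below assumes a hypothetical `AISystem` record and classification labels of your own choosing. It blocks a launch until the classification is documented and signed off (Week 1) and an evidence owner is assigned (Week 2).

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AISystem:
    name: str
    classification: Optional[str] = None   # e.g. "high risk", "transparency"
    signed_off_by: Optional[str] = None    # who approved the classification
    evidence_owner: Optional[str] = None   # who maintains compliance evidence


def launch_blockers(system: AISystem) -> list:
    """Reasons this system should stay frozen; an empty list means clear."""
    blockers = []
    if system.classification is None:
        blockers.append("classification not documented")
    elif system.classification == "prohibited":
        blockers.append("prohibited practice: do not launch")
    if system.signed_off_by is None:
        blockers.append("classification not signed off")
    if system.evidence_owner is None:
        blockers.append("no evidence owner assigned")
    return blockers


if __name__ == "__main__":
    inventory = [
        AISystem("support-drafting", "deployer", "CTO", "Head of Support"),
        AISystem("cv-ranking", "high risk"),
        AISystem("new-recommender"),
    ]
    for system in inventory:
        status = launch_blockers(system) or ["clear to launch"]
        print(f"{system.name}: {', '.join(status)}")
```

The same records can feed the board-level risk register: anything with a non-empty blocker list is an open item.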

Beyond the sprint, four moves matter most:
